Craig DeLancey, Science Fiction Writer

Start the Predator Space Chronicles for free!

Amir Tarkos is one of the only humans in the Predator Corp, the most feared and respected military force in the Galaxy. The Predators seek to prevent and punish lifecrimes: violations against ecosystems. With his partner Bria, a bear-like carnivore, Tarkos is given a dangerous and difficult mission. Deep space probes have located the last surviving Ulltrians, a race that once waged a holy war to force competition among the ecosystems of known space, and as a result nearly extinguished much of the life in the Galaxy. Tarkos and Bria must locate the World Hammer, a pair of co-orbiting sunless planets that are the last refuge of the Ulltrians, and discover the Ulltrian plans for a second attack on the Galactic Alliance.

To save Galactic civilization, Tarkos and Bria will have to work with a team of strange aliens and artificial intelligences, explore the perilous ruins of an ancient world, dare the depths of an ocean that engulfs a dark planet, and fight a fierce battle in the ice rings of a gas giant.

Evolution Commandos: Well of Furies is a stand-alone novel, but it is also the first volume of the seven-volume Predator Space Chronicles.

The Predator Space stories are good old-fashioned space operas with a green theme. If you like Niven or Bujold, you will like the Predator Space stories. You can also try out the world for yourself by listening to the free podcast that introduces the main characters, available at Escape Pod as episode 333: “Asteroid Monte.” Volume I of The Predator Space Chronicles is available for free at all your favorite bookstores.

In a Galaxy at war, one human will join the fight.

Lincoln Finn is having a bad day. He just got expelled from college, and his plans to travel the world are dashed. It seems all his dreams are dying.

But then a spaceship lands in his yard.

Lincoln has been accepted into the Harmonizer Academy, where aliens from across the Galaxy train to become the most elite warriors of a star-spanning Alliance. War has begun, and the survival of Earth depends upon whether the Harmonizers can win their battles.

But first, Lincoln must survive the training. With his “pack”—a group of seven aliens—he is given a daunting first task: to save a primitive species from extinction when their planet is targeted with an asteroid bombardment. But all is not as it seems, and the rag-tag team of candidates must alone confront new enemies of the Alliance. The future of the Alliance, and the survival of all life on Earth, depends upon their success.

Extinction Event is the first book of a six-volume Predator Academy series.

Physicist Ursula Song studies time—and hers has just run out.

One by one, her colleagues and friends are disappearing. Rival mercenaries are hunting her. And strange people follow her wherever she goes. Because Song has opened a door into a new kind of time, and whoever controls her invention will control the destiny of the human race.

Sidewhens is a stand-alone novel, but it is also the first book of the Sidewhens Trilogy.

Predator Space Chronicles Volume 2.

Amir Tarkos is one of the only humans in the Predator Corp, the most feared and respected military force in the Galaxy. With his partner Bria, a bear-like carnivore, Tarkos is on a dangerous and difficult mission to locate the last surviving Ulltrians, a race that once extinguished much of the life in the Galaxy.

With a team of allies, Tarkos and Bria locate and investigate the twin sunless worlds where the last Ulltrians dwell. In an ocean under ice, they must discover the war plans that could destroy the Galactic Alliance, and Earth with it, if the Ulltrians are not stopped. Treachery awaits, and not everyone will survive the twin black worlds in deepest space.

Predator Space Chronicles Volume 3.

Amir Tarkos is one of the only humans in the Predator Corp, the most feared and respected military force in the Galaxy. With his partner Bria, a bear-like carnivore, Tarkos is on a dangerous and difficult mission to fight the Ulltrians, a race that once extinguished much of the life in the Galaxy.

War has begun, but Bria has been accused of murder and treason, and Tarkos is suspected of being an accomplice. Only they can save the Alliance, but first they must escape from prison, raise an army of artificial intelligences, and seize control of the most dangerous weapon the Alliance ever created. Volume III of the seven volumes of the Predator Space Chronicles.

Predator Space Chronicles Volume 4.

Tarkos and Bria return to Earth to search for the Ulltrian presence hidden on the planet. But Earth is in turmoil as opposition grows to the plan to join the Galactic Alliance. And, thousands of light-years away, a young human girl, Margherita Calvino, has uncovered the secret that will enable the Ulltrians to rule the Galaxy. Tarkos and Bria face the human underground, while Margherita must outsmart a hostile alien race alone.

Omega Threshold: Earthrise is Volume IV of the seven volumes of the Predator Space Chronicles.

Predator Space Chronicles Volume 5.

Will the human race survive?

The Ulltrians, ancient warriors long thought extinct, now threaten the galaxy. Earth is next on their list of targets.

The human Amir Tarkos and his bear-like partner Bria are members of the elite Predator Corp, the only military force capable of defeating the Ulltrians.

In the conclusion to Omega Threshold, Tarkos and Bria join forces with a young human girl to thwart the secret invasion of Earth. Volume V of the seven-volume Predator Space Chronicles.

Predator Space Chronicles Volume 6.

Question Zero: answer it correctly, and your quest will be fulfilled. Answer incorrectly, and your head will be removed.

The human Amir Tarkos and his bear-like partner Bria are members of the elite Predator Corp, the only military force capable of defeating the dangerous Ulltrians. If they can locate where the Ulltrians have hidden their fleet, they can take the war to the enemy. But the answer lies on the Shroudworld, where rebels from throughout the galaxy have gathered to use mathematics as a weapon. And they want to claim the heads of Tarkos and Bria.

In a thinking labyrinth, when everyone betrays them, Tarkos and Bria have only each other, and their own cunning, to aid them. Can they defeat the most subtle enemies they have ever faced, in a struggle of logic and deception? Volume VI of the seven-volume Predator Space Chronicles.

Predator Space Chronicles Volume 7.

The stunning conclusion to The Predator Space Chronicles!

Thousands of years ago, the Ulltrians devastated much of the life in the galaxy. Now they have returned. The human Amir Tarkos and his bear-like partner Bria are members of the elite Predator Corp, and together they have discovered the secrets of the Ulltrians’ devastating new weapon. Now, in the epic conclusion to the Predator Space Chronicles, they bring war to the Ulltrians.

But the key to the Ulltrian weapon is held by one human child, abandoned among humanity’s enemies. Can Tarkos save her and face single combat with an Ulltrian, while the final battle rages around them?

Get the entire seven-volume series of the Predator Space Chronicles in a single huge omnibus!

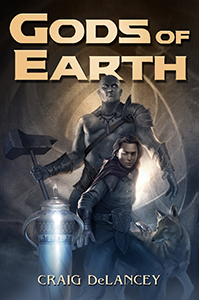

Thousands of years after a war against the gods drove humanity nearly extinct, something divine stirs. It awakens the Guardian, an ancient being pledged to destroy the gods, a task he believes long accomplished. Through deep caverns, he makes his way to the desolate surface of Earth and stalks toward the last human settlements, seeking the source of this strange power.

Far away, the orphan witch child Chance Kyrien is turning seventeen: today he will be confirmed as a Puriman and learn what stake in the family’s vineyard his adoptive father will give him. Ambitious, rebellious, but fiercely devout, Chance dreams only of being a farmer and winemaker, and marrying the girl he loves, the Puriman Ranger Sarah Michaels.

But a shocking cataclysm of violence and destruction turns Chance’s peaceful world upside down. Aided by his loyal friends and the Guardian, Chance must travel through time and space to battle the last remaining god. For the destinies of Chance and this final deity are fatally intertwined, and only one of them can survive.

“Craig DeLancey has created an astonishing, genuinely original fantasy world of exciting action and thought-provoking questions.”

– Hugo and Nebula award-winning author Nancy Kress

For a complete bibliography of Craig’s publications, click here.